How Much Does It Cost to Run ChatGPT Per Day?

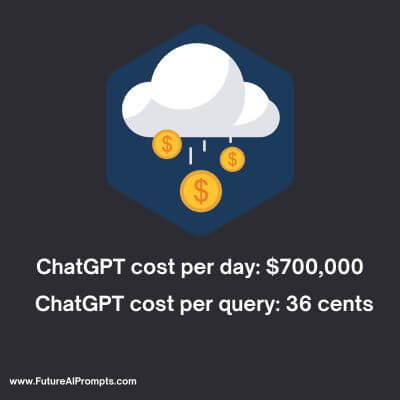

ChatGPT, the popular generative AI chatbot, costs an estimated $700,000 daily to operate, according to a report by SemiAnalysis’ Chief Analyst Dylan Patel.

By the same estimate, each query answered by the AI costs about 36 cents. The report sheds light on the high cost of running advanced AI technologies like ChatGPT.

In this article, we go over the factors behind ChatGPT's substantial operating expenses and give a detailed breakdown of the cost.

So let’s jump in.

How much does ChatGPT cost per day?

Running ChatGPT incurs considerable daily costs, estimated at around $700,000, primarily due to compute hardware expenses.

This cost model, however, is subject to numerous variables including the volume of queries, GPU performance, GPU pricing and availability, power and cooling requirements and maintenance costs.
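To see how those variables interact, here is a toy daily cost model in Python. The field names, the assumption that compute cost scales linearly with query volume, and the example figures are all hypothetical; this is not the model behind the $700,000 estimate, and GPU availability is not modeled.

```python
from dataclasses import dataclass


@dataclass
class DailyCostInputs:
    """Hypothetical inputs for a toy daily inference-cost model."""
    queries_per_day: float        # volume of queries
    queries_per_gpu_hour: float   # GPU performance (queries one GPU serves per hour)
    usd_per_gpu_hour: float       # GPU pricing
    power_cooling_usd: float      # power and cooling, dollars per day
    maintenance_usd: float        # maintenance, dollars per day


def daily_cost(x: DailyCostInputs) -> float:
    """Estimated daily operating cost in dollars (linear toy model)."""
    gpu_hours = x.queries_per_day / x.queries_per_gpu_hour
    compute_usd = gpu_hours * x.usd_per_gpu_hour
    return compute_usd + x.power_cooling_usd + x.maintenance_usd


# Hypothetical example: 10 million queries/day, 1,000 queries per GPU-hour,
# $3 per GPU-hour, and no power or maintenance costs.
example = DailyCostInputs(10_000_000, 1_000, 3.0, 0.0, 0.0)
print(f"${daily_cost(example):,.0f} per day")  # $30,000 per day
```

In this model, doubling the queries each GPU can serve per hour halves the compute term, which is why GPU performance matters as much as GPU price.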

Tom Goldstein (@tomgoldsteincs) explored this question in a Twitter thread on December 6, 2022, asking: “How many GPUs does it take to run ChatGPT? And how expensive is it for OpenAI?”

OpenAI relies on Nvidia GPUs, and it reportedly needs 30,000 additional GPUs to maintain its commercial trajectory in 2023.

Microsoft, a primary OpenAI investor, may develop proprietary AI chips to mitigate these expenses.

It’s estimated that an A100 GPU on Azure costs $3 per hour and that each word generated by ChatGPT costs about $0.0003, making the cost of a 30-word response approximately 1 cent.
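The per-response figure follows directly from the per-word estimate. A minimal sketch, assuming cost scales linearly with the number of words generated; the function name is illustrative:

```python
COST_PER_WORD_USD = 0.0003  # estimated cost per generated word, from the text


def response_cost_usd(words: int, cost_per_word: float = COST_PER_WORD_USD) -> float:
    """Cost of one response, assuming cost scales linearly with word count."""
    return words * cost_per_word


for words in (30, 100, 300):
    # prints 0.90, 3.00 and 9.00 cents respectively
    print(f"{words:>3} words: {response_cost_usd(words) * 100:.2f} cents")
```

A 30-word response comes to 0.9 cents, which the estimate rounds to about 1 cent.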

This suggests OpenAI’s running costs could be $100,000 per day, or $3 million monthly.
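The monthly figure, and the response volume the daily figure implies, can be checked with a few lines of arithmetic. This sketch assumes a 30-day month and, purely for illustration, that the whole daily budget goes to 30-word responses at $0.0003 per word:

```python
DAILY_COST_USD = 100_000              # estimated daily running cost, from the text
COST_PER_RESPONSE_USD = 30 * 0.0003   # 30-word response at $0.0003 per word
DAYS_PER_MONTH = 30                   # assumption

monthly_cost_usd = DAILY_COST_USD * DAYS_PER_MONTH
implied_responses_per_day = DAILY_COST_USD / COST_PER_RESPONSE_USD

print(f"Monthly cost: ${monthly_cost_usd:,}")                          # $3,000,000
print(f"Implied responses per day: {implied_responses_per_day:,.0f}")  # 11,111,111
```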

Although ChatGPT is free for users, its costs to OpenAI are substantial because of the complex infrastructure it requires.

This AI chatbot, known for its human-like responses, has raised concerns about its potential to replace jobs such as programming and writing.

However, its knowledge is limited because its training data only extends to 2021.

Despite these challenges, ChatGPT quickly amassed over a million users post-launch, indicating strong public interest and OpenAI’s potential for future growth.

How much does ChatGPT cost per query?

According to Dylan Patel, Chief Analyst at SemiAnalysis, operating ChatGPT costs around $700,000 per day, or about 36 cents per query.
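Dividing the daily estimate by the per-query estimate gives the query volume the two figures imply together. A back-of-envelope sketch using only the numbers above:

```python
DAILY_COST_USD = 700_000    # Patel's estimated daily operating cost
COST_PER_QUERY_USD = 0.36   # estimated cost per query (36 cents)

implied_queries_per_day = DAILY_COST_USD / COST_PER_QUERY_USD
print(f"{implied_queries_per_day:,.0f} queries per day")  # 1,944,444 queries per day
```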

However, OpenAI's own pricing looks very different: the GPT-3.5-turbo model costs $0.002 per 1,000 tokens (about 750 words), so a query of around 1,000 tokens costs roughly 0.2 cents.
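A minimal sketch of that per-token arithmetic. The helper names are hypothetical, the 750-words-per-1,000-tokens ratio is only the rough conversion quoted above, and the sketch assumes prompt and response tokens are billed at the same $0.002 rate:

```python
PRICE_PER_1K_TOKENS_USD = 0.002  # GPT-3.5-turbo API price quoted above
WORDS_PER_1K_TOKENS = 750        # rough conversion quoted above


def tokens_from_words(words: float) -> float:
    """Approximate token count for a given number of English words."""
    return words * 1000 / WORDS_PER_1K_TOKENS


def api_cost_usd(tokens: float) -> float:
    """API cost, assuming prompt and response tokens share one per-token price."""
    return tokens / 1000 * PRICE_PER_1K_TOKENS_USD


print(f"{api_cost_usd(1000) * 100:.2f} cents for 1,000 tokens")                # 0.20 cents
print(f"{api_cost_usd(tokens_from_words(300)) * 100:.2f} cents for 300 words")  # 0.08 cents
```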

That per-token price applies to developers using the API; ChatGPT Plus subscribers instead pay a flat $20/month. Free users pay nothing, so serving them likely costs OpenAI more, with the cost partially subsidized by corporate partners like Microsoft.

The effective cost per query therefore varies significantly with the access model (API, Plus subscription, or free tier) and with partnership agreements.

How much does ChatGPT cost Microsoft?

Microsoft, a main collaborator and investor in OpenAI Inc., reportedly invested $1 billion in 2019 and a further $10 billion in 2023, supporting projects like ChatGPT through Azure cloud services and possibly its own proprietary AI chip, code-named Athena.

The financial terms are undisclosed, but they suggest Microsoft bears a substantial share of ChatGPT's running costs. Microsoft also reportedly has exclusive access to GPT-4's source code for its products.

ChatGPT, which is hosted on Azure, reportedly contributes only about 3% of the hardware costs, implying that Microsoft finances a significant part of ChatGPT's operation.

How much does ChatGPT search cost compared to Google search?

Google’s search engine, financed through advertising, has an estimated cost per query of 2.5 cents based on its average revenue per user (ARPU).

On the other hand, ChatGPT’s estimated cost per query is higher, around 36 cents, making it roughly 14 times more expensive than Google’s per-query cost.
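The ratio, and what the gap would mean at scale, can be sketched as below. The Google figure is derived from revenue (ARPU) rather than measured cost, so the comparison is only indicative, and the 1-billion-queries-per-day volume is a hypothetical input, not a figure from the source:

```python
CHATGPT_CENTS_PER_QUERY = 36.0   # estimate quoted above
GOOGLE_CENTS_PER_QUERY = 2.5     # ARPU-based estimate quoted above

ratio = CHATGPT_CENTS_PER_QUERY / GOOGLE_CENTS_PER_QUERY
print(f"ChatGPT / Google: {ratio:.1f}x")  # 14.4x


def extra_daily_cost_usd(queries_per_day: float) -> float:
    """Extra dollars per day if every query cost the ChatGPT estimate instead."""
    diff_cents = CHATGPT_CENTS_PER_QUERY - GOOGLE_CENTS_PER_QUERY
    return queries_per_day * diff_cents / 100


# Hypothetical volume of 1 billion queries per day:
print(f"${extra_daily_cost_usd(1e9):,.0f} extra per day")  # $335,000,000 extra per day
```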

What is the Cost of ChatGPT Researchers & Developers?

AI researchers and developers, with salaries averaging $150,000 and $127,000 at OpenAI and Microsoft respectively, are a significant cost in developing ChatGPT.

With an estimated 100 to 200 full-time workers on the project, base salaries alone would come to roughly $13 million to $30 million a year.

On top of base salary, benefits, taxes, equipment, and operations add roughly another 30%.
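Putting the salary figures and the 30% overhead together gives a rough annual range. The bracketing choice (pairing the low headcount with the Microsoft average and the high headcount with the OpenAI average) and the function name are assumptions made for illustration:

```python
def annual_staff_cost_usd(headcount: int, avg_salary_usd: float,
                          overhead_rate: float = 0.30) -> float:
    """Base salaries plus a flat overhead (benefits, taxes, equipment, operations)."""
    return headcount * avg_salary_usd * (1 + overhead_rate)


# Bracket the range: 100 staff at the Microsoft average, 200 at the OpenAI average.
low = annual_staff_cost_usd(100, 127_000)
high = annual_staff_cost_usd(200, 150_000)
print(f"${low:,.0f} to ${high:,.0f} per year")  # $16,510,000 to $39,000,000 per year
```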

When combined with other expenditures like compute resources, the total R&D cost for ChatGPT can be considerable.

Given the project's scale and complexity, daily operating costs can run well into the hundreds of thousands of dollars, sometimes reaching the $1 million mark.

What is the Cost of ChatGPT Computing Resources?

ChatGPT’s operational cost is significant due to the massive computing resources required.

Costs range from hundreds to thousands of dollars per hour, depending on the complexity of tasks, model size, and usage duration.

OpenAI runs ChatGPT on advanced hardware such as Nvidia's A100 GPUs, using approximately 3,617 HGX A100 servers. At eight GPUs per server, that equates to 28,936 GPUs.

Each server costs around $200,000, leading to an overall hardware investment of about $723 million.
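The GPU count and the hardware total both follow from the server estimate. A minimal sketch reproducing that arithmetic, assuming the 8-GPU HGX A100 configuration implied by the figures above:

```python
SERVERS = 3_617              # estimated HGX A100 servers, from the text
GPUS_PER_SERVER = 8          # 8-GPU HGX A100 configuration
USD_PER_SERVER = 200_000     # estimated price per server, from the text

total_gpus = SERVERS * GPUS_PER_SERVER
hardware_usd = SERVERS * USD_PER_SERVER

print(f"GPUs: {total_gpus:,}")                   # GPUs: 28,936
print(f"Hardware: ${hardware_usd / 1e6:,.1f}M")  # Hardware: $723.4M
```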

Furthermore, the power consumption cost is substantial, estimated at around $52,000 per day.
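The formula behind a power estimate like this is simple, even if its inputs are not. This sketch plugs in the server count from above plus two assumed values that are not from the source: 6.5 kW per server (about the rated maximum draw of an NVIDIA DGX A100 system) and $0.09 per kWh. It lands in the same range as the $52,000 figure, but treat it as an illustration of the formula, not a reconstruction of the source's estimate:

```python
def daily_energy_cost_usd(servers: int, kw_per_server: float,
                          usd_per_kwh: float, hours: float = 24.0) -> float:
    """Energy cost = servers x average kW drawn x hours x electricity price.

    Ignores cooling overhead (PUE) and assumes a constant power draw.
    """
    return servers * kw_per_server * hours * usd_per_kwh


# Assumed inputs (not from the source): 6.5 kW per server, $0.09 per kWh.
cost = daily_energy_cost_usd(3_617, 6.5, 0.09)
print(f"${cost:,.0f} per day")  # $50,783 per day
```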

With depreciation expenses, the total daily cost of ChatGPT’s compute hardware is roughly $694,444.
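The source does not spell out how depreciation and power combine into this daily total. One way to check that the figures hang together is to assume the $694,444 covers only straight-line hardware depreciation plus the $52,000 power bill (an assumption, not the source's stated method) and back out the depreciation period it implies:

```python
HARDWARE_USD = 723_400_000    # 3,617 servers x $200,000, from the text
DAILY_TOTAL_USD = 694_444     # estimated daily compute hardware cost
DAILY_POWER_USD = 52_000      # estimated daily power cost


def implied_depreciation_years(hardware_usd: float, daily_total_usd: float,
                               daily_power_usd: float) -> float:
    """Straight-line period implied if power is the only other daily cost (assumption)."""
    daily_depreciation = daily_total_usd - daily_power_usd
    return hardware_usd / (daily_depreciation * 365)


years = implied_depreciation_years(HARDWARE_USD, DAILY_TOTAL_USD, DAILY_POWER_USD)
print(f"Implied depreciation period: {years:.1f} years")  # 3.1 years
```

Under that assumption, the hardware would be written off in roughly three years.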

This clearly underscores the immense financial commitment needed to sustain the infrastructure and maintenance of ChatGPT’s computing resources.

What is the Cost of ChatGPT Data Storage & Maintenance?

ChatGPT’s operation depends heavily on data storage and maintenance, both of which carry considerable costs.

The required storage capacity is enormous due to the large datasets used for training, implying major infrastructure costs.

The actual cost depends on the type and volume of storage, plus the cloud service provider’s pricing.

For example, Azure’s Blob Storage charges around $0.0184 per GB per month for hot storage and roughly $0.01 per GB per month for cold storage.
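To show how those per-gigabyte rates translate into a monthly bill, here is a flat-rate sketch. The storage footprint is hypothetical, terabytes are treated as 1,000 GB for simplicity, and the sketch ignores tiered volume discounts, transaction fees, and egress charges:

```python
HOT_USD_PER_GB_MONTH = 0.0184   # Azure Blob hot-tier price quoted above
COLD_USD_PER_GB_MONTH = 0.01    # colder-tier price quoted above
GB_PER_TB = 1_000               # decimal terabytes (simplifying assumption)


def monthly_blob_cost_usd(hot_tb: float, cold_tb: float) -> float:
    """Flat-rate monthly storage cost for data split across two tiers."""
    hot_gb = hot_tb * GB_PER_TB
    cold_gb = cold_tb * GB_PER_TB
    return hot_gb * HOT_USD_PER_GB_MONTH + cold_gb * COLD_USD_PER_GB_MONTH


# Hypothetical footprint: 500 TB hot, 2,000 TB (2 PB) cold.
print(f"${monthly_blob_cost_usd(500, 2_000):,.0f} per month")  # $29,200 per month
```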

At the data volumes ChatGPT works with, even these per-gigabyte prices add up to a consequential expense.

Data maintenance, which covers security, backups, encryption, compression, indexing, and processing, is also key to ChatGPT’s performance and security.

Although these maintenance costs are hard to estimate, they contribute significantly to the total expense.

Why Is ChatGPT So Expensive for Its Creators?

ChatGPT is a powerful deep learning model developed by OpenAI. To understand and process human language effectively, it needs significant computing power and memory, which in turn requires powerful servers and high-performance computers.

The daily volume of user queries demands still more computing power to keep responses quick and accurate.

The continuous development and maintenance by OpenAI’s expert team also factor into ChatGPT’s cost.

Storing and managing the vast data used by ChatGPT, while ensuring stringent security measures to safeguard user interactions, also contribute to its overall expenses.

Hence, the high costs are attributed to computational requirements, development and maintenance efforts and data management.

Frequently Asked Questions (FAQs) about the Cost of Running ChatGPT

Q: Is ChatGPT expensive to run?

A: Yes, due to the significant computing power required.

Q: How much did it cost to develop ChatGPT?

A: Training GPT-3 cost around $5 million, and Microsoft has reportedly invested $11 billion in OpenAI’s project.

Q: Why is ChatGPT so expensive to run?

A: It needs substantial server resources, costing up to $700,000 a day. An AI chip is being developed to cut costs.

Q: How much does ChatGPT cost to use?

A: OpenAI charges $20/month for usage on chat.openai.com with the ChatGPT Plus subscription.

Q: How many GPUs were used to train ChatGPT?

A: Approximately 10,000 Nvidia GPUs were used.

I hope this article helped you understand the cost of running and maintaining ChatGPT for OpenAI.
